AI-Generated Audio: Deepfake Impersonates Amy Klobuchar to Criticize Sydney Sweeney

A sophisticated deepfake audio clip has surfaced, mimicking Senator Amy Klobuchar's voice to criticize actress Sydney Sweeney's recent comments. The incident highlights the growing concern surrounding the misuse of AI-generated audio and its potential to spread misinformation and damage reputations. This disturbing development underscores the urgent need for improved detection methods and stricter regulations surrounding deepfake technology.

The clip, which quickly went viral on social media platforms like X (formerly Twitter) and TikTok, features a voice remarkably similar to Senator Klobuchar's. In the audio, the AI-generated Klobuchar criticizes Sweeney for unspecified comments, launching into a scathing, albeit fabricated, rebuke. While the exact content of Sweeney's original remarks that prompted this deepfake remains unclear, the incident serves as a stark warning about the ease with which AI can be used to create convincing, yet entirely false, audio recordings.

<h3>The Dangers of Deepfake Audio</h3>

This isn't the first time deepfake technology has been used to impersonate public figures. Similar incidents have involved high-profile politicians and celebrities, often for malicious purposes such as spreading disinformation or damaging reputations. The rapid advancement of AI voice cloning makes it increasingly difficult to distinguish genuine audio from sophisticated fakes. That difficulty poses a significant threat to:

- Political discourse: Deepfakes can be used to manipulate public opinion and sway elections by fabricating damaging statements from political opponents.

- Personal reputation: As seen in this case, individuals can become victims of smear campaigns based on entirely fabricated audio.

- National security: The potential for deepfakes to disrupt markets or incite violence through manipulated audio is a significant concern.

<h3>Detecting AI-Generated Audio: The Challenges Ahead</h3>

Identifying deepfake audio is proving to be a complex challenge. While some advanced techniques are being developed, they often require specialized software and expertise. Current methods focus on subtle inconsistencies in vocal patterns, intonation, and background noise, but the technology is constantly evolving, making detection an ongoing arms race.

Several research groups and tech companies are actively working on improving deepfake detection. That work includes developing algorithms that can identify subtle artifacts left behind by the AI generation process, as well as creating more robust watermarking techniques to authenticate genuine audio.

<h3>The Need for Regulation and Public Awareness</h3>

The incident involving Senator Klobuchar and Sydney Sweeney underscores the critical need for stronger regulations and increased public awareness surrounding AI-generated audio. Governments and tech companies need to collaborate to develop effective strategies to combat the spread of deepfakes. This includes:

- Developing robust detection technologies: Investing in research and development to create more sophisticated and accessible deepfake detection tools.

- Improving media literacy: Educating the public about the existence and dangers of deepfake technology to help them critically assess online content.

- Implementing stricter legislation: Enacting laws that hold those responsible for creating and distributing malicious deepfakes accountable.

This latest deepfake incident serves as a wake-up call. The potential for misuse of AI-generated audio is vast, and the consequences can be devastating. Only through a combined effort of technological advancement, increased public awareness, and effective legislation can we hope to mitigate the risks posed by this rapidly evolving technology. Staying informed and developing critical thinking skills are essential to navigating this increasingly complex digital landscape.

Featured Posts

-

Us Open 2025 Analysis Of The Thrilling Second Round Encounters

Aug 28, 2025

Us Open 2025 Analysis Of The Thrilling Second Round Encounters

Aug 28, 2025 -

Poundland Averted Administration Rescue Deal Secures Future

Aug 28, 2025

Poundland Averted Administration Rescue Deal Secures Future

Aug 28, 2025 -

Public Protest Targets Senator Susan Collins Angry Demonstrators Shout Shame

Aug 28, 2025

Public Protest Targets Senator Susan Collins Angry Demonstrators Shout Shame

Aug 28, 2025 -

Estate Agent Ad Reveals Artwork Missing Since Nazi Looting

Aug 28, 2025

Estate Agent Ad Reveals Artwork Missing Since Nazi Looting

Aug 28, 2025 -

From Crisis To Stability Poundlands Successful Rescue Deal

Aug 28, 2025

From Crisis To Stability Poundlands Successful Rescue Deal

Aug 28, 2025

Latest Posts

-

Showtimes Dexter Original Sin Renewal Reversal Leaves Fans Shocked

Aug 29, 2025

Showtimes Dexter Original Sin Renewal Reversal Leaves Fans Shocked

Aug 29, 2025 -

Mans Comments To Epping Girls Lead To Court Appearance

Aug 29, 2025

Mans Comments To Epping Girls Lead To Court Appearance

Aug 29, 2025 -

Protests Erupt At Maine Ribbon Cutting Event Attended By Senator Collins

Aug 29, 2025

Protests Erupt At Maine Ribbon Cutting Event Attended By Senator Collins

Aug 29, 2025 -

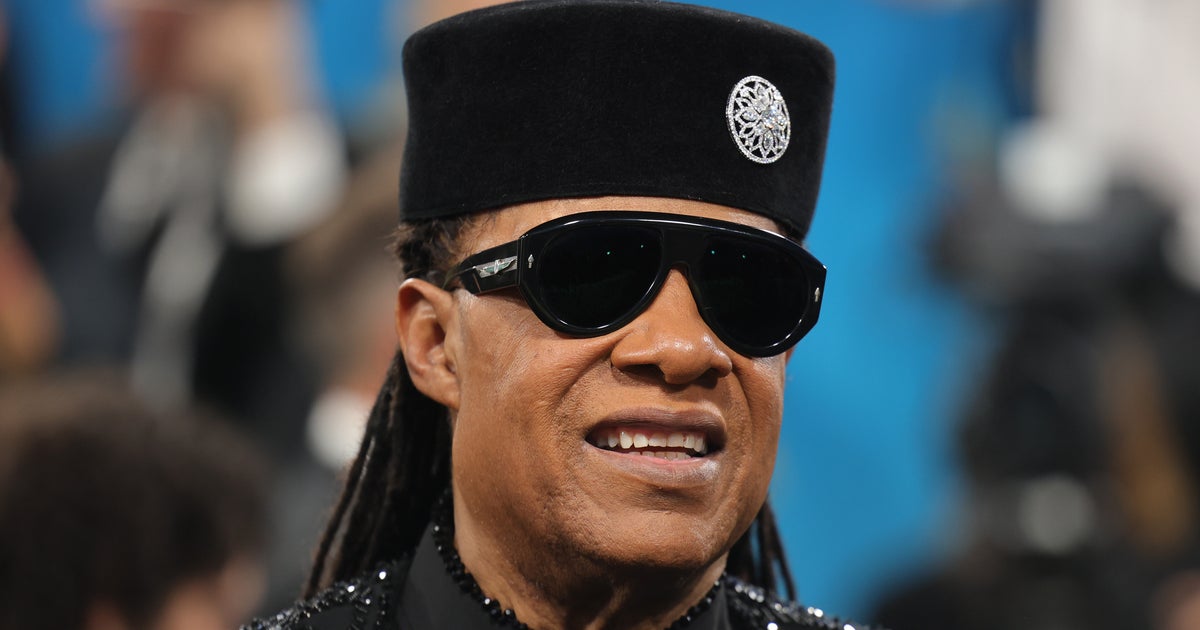

Putting Rumors To Rest Stevie Wonders Definitive Vision Update

Aug 29, 2025

Putting Rumors To Rest Stevie Wonders Definitive Vision Update

Aug 29, 2025